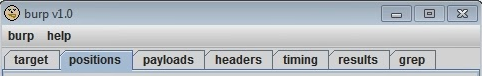

Burp Suite needs no introduction: it is THE ultimate tool for web pentests. The first version was born 12 years ago:

Cute, wasn’t it? It was more or less what you get in the Intruder tab now.

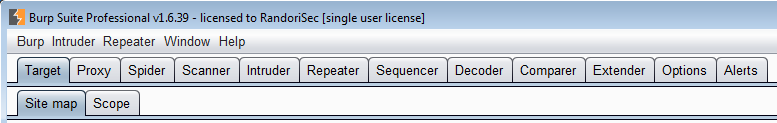

Today it’s a full toolbox:

However, having the best tool in the world is useless if you don’t know how to work with it. A while ago, a client asked us to pentest their main web application. When they asked us which tools we used, they were a bit disappointed when we answered “Burp Suite Pro”. In fact, they were using it too and couldn’t find any vulnerability in their application with it. They thought that we wouldn’t be able to find any issues unless we used €50k+ tools like WebInspect or AppScan. This is a very common misconception in IT security (well, not only there…): costly tools will provide better protection.

So we asked about their methodology and found that they were using Burp Suite like point-and-click software: they launched it without spidering first and ignored the session handling rules. We convinced them to let us try to find weaknesses with the same tool by spending a little more time configuring it, and the job was done. Even though the application was pretty well built and we used the same tool, we found a few serious vulnerabilities!

The equation could be written this way:

Vulnerabilities lying in the application × tool × methodology × time = Discovered vulnerabilities

So if any factor on the left equals 0, the number of discovered vulnerabilities will always be 0! Conversely, if you use a good tool correctly and spend enough time on it, you will find vulnerabilities if there are any.

We don’t have a magic recipe to make the methodology part easy, but we can give a few pointers and quick wins:

1. The basics

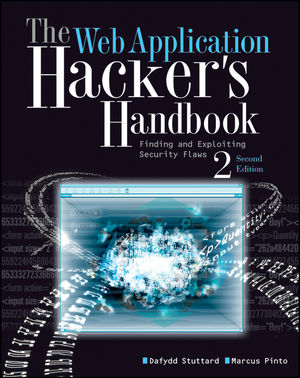

The first one is clearly not a quick win, but it is pretty much mandatory: read and follow the WAHH (The Web Application Hacker’s Handbook) or the OWASP pentest guide. Both if you can.

2. Lost in your webapp pentest? Need fresh ideas/checks?

Sometimes you get stuck in your pentest. You’ve already done many, many checks and haven’t spotted any good vulnerability. Maybe you’ve missed an important check? Following the WAHH checklist can help you avoid overlooking important things. A very complete version is provided in the book (pages 791 to 852: yes, 61 full pages!), but if you don’t have the book you can use the summary list provided by MDSec.

Main steps are:

- Recon and analysis

- Test handling of access

- Test handling of input

- Test application logic

- Assess application hosting

- Miscellaneous tests

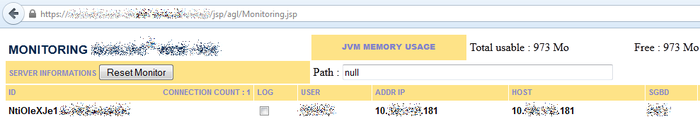

3. Good recon can sometimes spot the gold nugget

The process of spidering (aka crawling) recursively visits every URL found on the website to get an exhaustive view of every entry point. Scanning a website without spidering it first makes no sense: you’ll scan only a small part of the whole thing. After a good (manual and automatic) spidering, you can discover additional content using the automatic “Content Discovery” tool included in Burp Suite and using the “Intruder” with a good dictionary (here we use a highly modified wfuzz dictionary mixed with a good old dirbuster one).

Manual guessing can sometimes be rewarding: searching the HTML and the JS, especially the comments, can lead to pages that were not crawled. Understanding the logic behind the filenames and the folders can lead to other “hidden” pages, like the priceless monitoring page we found, which gave the cookies of every active session! Take any one of them and you’re authenticated.
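To make the "search the comments" idea concrete, here is a minimal sketch that pulls path-like strings out of the HTML comments of a page you have already saved. It is not part of Burp Suite; the `CommentCollector` class, the `candidate_paths` function, the regular expression and the sample page are all assumptions made up for the illustration, and it only looks at HTML comments (not JS ones).

```python
import re
from html.parser import HTMLParser


class CommentCollector(HTMLParser):
    """Collect the text of every HTML comment in a page."""

    def __init__(self):
        super().__init__()
        self.comments = []

    def handle_comment(self, data):
        self.comments.append(data.strip())


# Rough, illustrative pattern for absolute paths such as /admin/status.jsp.
PATH_PATTERN = re.compile(r"/[A-Za-z0-9_\-./]+")


def candidate_paths(html):
    """Return the sorted set of path-like strings found in HTML comments."""
    collector = CommentCollector()
    collector.feed(html)
    found = set()
    for comment in collector.comments:
        found.update(PATH_PATTERN.findall(comment))
    return sorted(found)


# Hypothetical page source, used only to exercise the function.
SAMPLE = """
<html><body>
<!-- TODO: remove link to /monitoring/sessions.jsp before release -->
<a href="/index.jsp">Home</a>
<!-- old backup kept in /backup/site.zip -->
</body></html>
"""

for path in candidate_paths(SAMPLE):
    print(path)
```

Any path found this way is only a candidate: it still has to be requested (for example through the Proxy or the Intruder) to check whether the page really exists.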

Adding a debug/admin/verbose parameter can help too. The famous “admin=true” helped us once on a very sensitive application.
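As a rough sketch of this trick, the snippet below builds variants of a URL with one extra debug-style parameter each, which could then be pasted into a tool of your choice. The parameter names and values in `DEBUG_PARAMS` and `DEBUG_VALUES`, the `debug_variants` function and the example URL are assumptions chosen for the illustration, not a list taken from any tool.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names and values worth trying; extend to suit the target.
DEBUG_PARAMS = ["admin", "debug", "verbose", "test"]
DEBUG_VALUES = ["true", "1"]


def debug_variants(url):
    """Yield copies of `url` with one extra debug-style parameter appended."""
    parts = urlsplit(url)
    existing = parse_qsl(parts.query, keep_blank_values=True)
    existing_names = {name for name, _ in existing}
    for name in DEBUG_PARAMS:
        if name in existing_names:
            continue  # do not duplicate a parameter the page already uses
        for value in DEBUG_VALUES:
            query = urlencode(existing + [(name, value)])
            yield urlunsplit(parts._replace(query=query))


for variant in debug_variants("https://example.test/account?id=42"):
    print(variant)
```

Comparing the response to each variant with the response to the original request (status, length, content) is what tells you whether a parameter had any effect.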

4. Session handling

If the website has an authenticated part and you want to check it for vulnerabilities using Burp’s scanner, you should take care of… the sessions! Not doing so will lead to session logouts or access denied pages. And if you don’t tell Burp how to handle that, it will continue to scan and scan again, hitting the wall on every new request. A waste of time.

By default, Burp monitors the cookies received by the Spider and the Proxy and adds them to its cookie jar. That means that if you log in to the webapp using your browser and the traffic goes through Burp Proxy, the cookie jar will be updated. The tools (e.g. the Scanner) then use the cookie jar to access the webapp, so they are authenticated on the webapp just like you are in your browser. Macros can help handle cases where the webapp disconnects you after a period of inactivity. Don’t forget the “Platform authentication” section if the server asks for Basic/Digest/NTLM authentication. Same thing for the “Client SSL Certificates” section if the server asks for one.
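The logic behind "check the session, log in again if needed" can be sketched outside Burp as follows. This is not how Burp implements its session handling rules; the `Response` class, the `LOGIN_MARKERS` strings, the `/login` path and the `send`/`relogin` callables are all hypothetical placeholders for the illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """Tiny stand-in for an HTTP response (hypothetical, for illustration)."""
    status: int
    headers: dict = field(default_factory=dict)
    body: str = ""


# Hypothetical strings that a logged-out page of the target might contain.
LOGIN_MARKERS = ("please log in", "session expired")


def session_is_valid(resp, login_path="/login"):
    """Guess from a response whether the session is still authenticated."""
    if resp.status == 401:
        return False
    location = resp.headers.get("Location", "")
    if resp.status in (301, 302, 303, 307) and login_path in location:
        return False  # redirected to the login page
    body = resp.body.lower()
    return not any(marker in body for marker in LOGIN_MARKERS)


def scan(requests, send, relogin):
    """Send each request, logging in again and retrying once if the session is lost."""
    results = []
    for req in requests:
        resp = send(req)
        if not session_is_valid(resp):
            relogin()
            resp = send(req)
        results.append((req, resp.status))
    return results
```

Without the validity check, every request sent after the logout would come back as a redirect or an error page, and the scan results would be meaningless.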

5. Scanning

If you’ve carefully followed each step (it could take hours), you’re ready to dig into the Scanner tab. Many options let you configure how the scan is handled: how many threads are used, which categories are checked (SQLi, XSS, etc.), which parameters to avoid (e.g. a specific cookie name), and so on. A very common pitfall is related to insertion points. As the Burp help explains, “an insertion point is a location within the request where attacks will be placed, to probe for vulnerabilities.” By default, Burp will fuzz up to 30 insertion points per request. So if a typical request has 31 and the vulnerability is located in insertion point no. 31, which is not scanned, the vulnerability won’t be seen. In the following picture, you can see that many insertion points are missed. The scanner is configured to go up to 80 but the others are skipped. What if the issues involve these skipped insertion points? You won’t see them.

Setting a huge value makes the scan comprehensive, but it also makes it a lot slower, so you should configure this part carefully.
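To get a feel for how quickly a request can exceed the limit, here is a simplified sketch that counts the URL parameters, form-body parameters and cookies of one request and compares the total with a configured maximum. Burp considers more kinds of insertion points than these three, so treat the count as a lower bound; the function names and the example request are assumptions made up for the illustration.

```python
from urllib.parse import parse_qsl, urlsplit


def count_insertion_points(url, body="", cookie_header=""):
    """Count URL parameters, form-body parameters and cookies in one request."""
    query_params = parse_qsl(urlsplit(url).query, keep_blank_values=True)
    body_params = parse_qsl(body, keep_blank_values=True)
    cookies = [c for c in cookie_header.split(";") if c.strip()]
    return len(query_params) + len(body_params) + len(cookies)


def skipped_points(total, limit):
    """Number of insertion points left untested for a given per-request limit."""
    return max(0, total - limit)


# Hypothetical request, used only to exercise the functions.
total = count_insertion_points(
    "https://example.test/search?q=a&page=2",
    body="name=x&email=y&comment=",
    cookie_header="sid=abc; lang=en",
)
print(total, skipped_points(total, limit=30))
```

Running this kind of count on the largest requests of the application gives a rough idea of the value needed before starting the scan.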

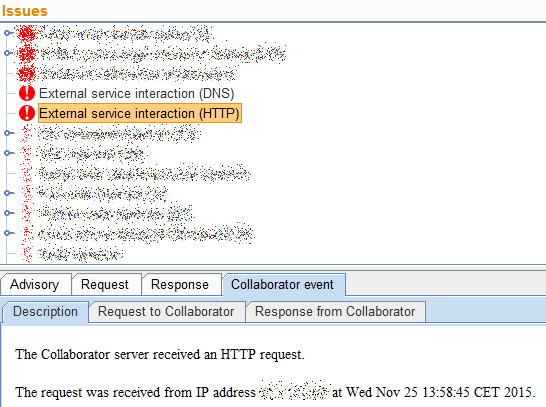

6. Collaborator

Introduced one year ago, the Collaborator is a Burp feature that helps detect vulnerabilities that don’t trigger changes in the application’s responses (e.g. blind XSS and SSRF). Classic scanners won’t see these vulnerability classes. To detect them, the Collaborator listens for incoming DNS/HTTP/HTTPS requests and, when scanning, Burp tries to make the web server connect to the Collaborator. If the Collaborator is hit, it is very likely that there is an issue. On many pentests, SSRF helps access internal resources, and when the underlying OS is Windows it could lead to a very cool NetNTLM hash leak.

That’s all for now!
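The general principle (plant a unique external address in each tested parameter, then see which addresses get contacted) can be sketched as follows. This is not Burp’s implementation or its API: the `InteractionTracker` class, the `collab.example.test` domain and the token format are assumptions invented for the illustration, and the listener that would actually record DNS/HTTP hits is left out.

```python
import secrets

COLLABORATOR_DOMAIN = "collab.example.test"  # hypothetical listener domain


class InteractionTracker:
    """Link unique payload hostnames back to the request that carried them."""

    def __init__(self, domain=COLLABORATOR_DOMAIN):
        self.domain = domain
        self.pending = {}  # token -> (request id, parameter name)

    def payload_for(self, request_id, parameter):
        """Return a unique URL to inject into `parameter` of a given request."""
        token = secrets.token_hex(8)
        self.pending[token] = (request_id, parameter)
        return f"http://{token}.{self.domain}/"

    def match(self, observed_host):
        """Map a hostname seen by the listener back to the request that planted it."""
        suffix = "." + self.domain
        host = observed_host.lower().rstrip(".")
        if not host.endswith(suffix):
            return None
        token = host[: -len(suffix)].split(".")[-1]
        return self.pending.get(token)


tracker = InteractionTracker()
payload = tracker.payload_for(17, "avatar_url")
# Later, the listener reports a lookup for the hostname contained in the payload:
seen_host = payload[len("http://"):-1]
print(tracker.match(seen_host))
```

Because each token is used only once, a single hit on the listener is enough to tell which request and which parameter caused the server to call out.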

We’ll soon post other tips here but in the meantime if you need training on Burp Suite feel free to ask us.

In summary:

- Read and follow the WAHH or OWASP pentest guide and their checklists

- Do a good (manual and automatic) spidering

- Discover additional content using the automatic “Content Discovery” tool and the “Intruder” with a good dictionary

- Discover additional content manually (in html/js, comments), by understanding the logic behind the filenames and the folders, by adding a debug/admin/verbose parameter

- Take care of authentication and session handling

- Configure the scanner and don’t miss important insertion points

- Don’t forget the collaborator